Augmented reality (AR): A term for a live direct or indirect view of a physical real-world environment whose elements are merged with or augmented by virtual computer-generated imagery… (Wikipedia)

With the release of the iPhone 3GS and iPhone OS 3.1, apps that incorporate “augmented reality” are big news these days. However, AR has been around for a while, and a simple AR app for the iPhone 3G was introduced in October 2008. The first version of iHUD ($5.99; i-hud.com) turns the screen into a heads-up display with a simple moving horizon. Using the 3G’s built-in accelerometer and GPS, iHUD shows the direction you’re traveling along with your speed and altitude. As your position and speed change, real-world information is overlaid on iHUD’s virtual display.
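iHUD’s internals aren’t described here, but the heading and speed readouts of a heads-up display like this can be derived from two successive GPS fixes. The sketch below is an illustrative assumption, not iHUD’s code: it uses the haversine formula for distance and the standard initial-bearing formula for direction of travel, on a spherical Earth of radius 6,371 km. The function names are hypothetical. (On the iPhone itself, Core Location’s `CLLocation` already reports `speed` and `course`.)

```python
import math

# Assumption: spherical Earth with mean radius in metres.
EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing (0 = north, 90 = east) from point 1 toward point 2."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dl = math.radians(lon2 - lon1)
    x = math.sin(dl) * math.cos(p2)
    y = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dl)
    return math.degrees(math.atan2(x, y)) % 360.0


def speed_and_course(fix_a, fix_b):
    """Hypothetical helper. Each fix is (lat_deg, lon_deg, timestamp_s).

    Returns (speed in m/s, course in degrees) for travel from fix_a to fix_b.
    """
    lat1, lon1, t1 = fix_a
    lat2, lon2, t2 = fix_b
    dt = t2 - t1
    if dt <= 0:
        raise ValueError("fixes must be in chronological order")
    distance = haversine_m(lat1, lon1, lat2, lon2)
    return distance / dt, initial_bearing_deg(lat1, lon1, lat2, lon2)
```

For example, two fixes 0.001 degree of latitude apart and taken 10 seconds apart give roughly 11.1 m/s on a course of 0 degrees (due north). Real GPS fixes are noisy, so a display would typically smooth several readings rather than trust a single pair.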

iHUD turns the screen into a heads-up display with a moving horizon

For this article, let’s refine the definition of AR a bit more, and require that the virtual information displayed by an AR app must contain a spatial component that is dependent upon the view in question. In other words, as the view of the real world we see on the iPhone screen changes, the virtual information displayed on the screen should change accordingly and relate accurately to what we see. This makes it a bit harder to incorporate AR into an app, but it opens up even more interesting possibilities.
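Making the virtual information “relate accurately to what we see” means deciding where on the screen a label for a real object belongs as the view changes. A minimal sketch, assuming a simple pinhole camera model, a compass heading for the direction the camera points, a known compass bearing from the user to the object, and an assumed horizontal field of view; the function name is hypothetical and the 50-degree field of view used below is a placeholder, not a published iPhone specification.

```python
import math


def project_bearing_to_screen_x(heading_deg, target_bearing_deg, hfov_deg, screen_width_px):
    """Horizontal pixel position for an object, or None if it is off-screen.

    heading_deg: compass direction the camera is pointing (0 = north).
    target_bearing_deg: compass bearing from the user to the object.
    hfov_deg: assumed horizontal field of view of the camera.
    """
    # Signed angle between the camera axis and the object, wrapped into [-180, 180).
    delta = (target_bearing_deg - heading_deg + 180.0) % 360.0 - 180.0
    half_fov = hfov_deg / 2.0
    if abs(delta) > half_fov:
        return None  # outside the field of view (possibly behind the user)
    # Pinhole model: focal length in pixels derived from the field of view.
    focal_px = (screen_width_px / 2.0) / math.tan(math.radians(half_fov))
    return screen_width_px / 2.0 + focal_px * math.tan(math.radians(delta))
```

As the user turns, `heading_deg` changes and the label slides across the screen; an object straight ahead lands at the center (x = 160 on a 320-pixel-wide screen), and once it leaves the field of view the function returns `None` so the label can be hidden.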

iPhone OS 3.0 and the 3GS make robust AR possible

The iPhone is not the first commercial mobile device with AR capabilities; that honor is shared by Android phones and the Nokia N97. However, both of those devices lack the video capabilities of the iPhone 3GS, so even though we have seen some great AR apps on those platforms, I’m hopeful that we’ll see even more ambitious projects for the iPhone. (Note that the iPhone 3G is not as well suited to AR tasks because it lacks a compass sensor and a camera with high enough resolution and refresh rate.) To support AR, a mobile device should provide four key functions:

- A compass sensor with resolution and accuracy of 0.1 degree or better.

- Sufficiently accurate GPS.

- Access to geo-resolved data accurate enough to align with what the user sees on the display.

- A video camera of high enough quality to detect the necessary details in the scene while maintaining a refresh rate sufficient to track and measure movement in the scene (not strictly required, but desirable, as we will see later).
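To see why the compass requirement is so strict, consider how a heading error shows up both on screen and in the world. This sketch reuses a pinhole camera assumption; the function name is hypothetical, and the 50-degree field of view and 320-pixel screen width in the example are placeholders. An error of `err` degrees shifts a centered label by `focal * tan(err)` pixels and makes the app point `distance * tan(err)` metres to the side of the real object.

```python
import math


def heading_error_effect(error_deg, hfov_deg, screen_width_px, target_distance_m):
    """Return (pixel_shift, lateral_offset_m) caused by a compass heading error.

    pixel_shift: how far a label drawn at the screen center moves, assuming a
    pinhole camera with the given (assumed) horizontal field of view.
    lateral_offset_m: how far to the side of the real object the app points
    at the given distance.
    """
    focal_px = (screen_width_px / 2.0) / math.tan(math.radians(hfov_deg / 2.0))
    shift_px = focal_px * math.tan(math.radians(error_deg))
    offset_m = target_distance_m * math.tan(math.radians(error_deg))
    return shift_px, offset_m
```

With those placeholder numbers, 0.1 degree of error moves a label by about 0.6 pixel, which is invisible, while a 5-degree error moves it by roughly 30 pixels and, at 100 m, points about 8.7 m away from the real object.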

The unfortunate truth is, if any of the four elements listed above is insufficient, the user experience degrades quickly. For example, if the alignment of the virtual content to reality is off by even a small degree, the AR experience could be worthless, or worse, provide false information. This could turn out to be a major concern, especially since I have experienced my fair share of connection problems and GPS errors as I travel around the San Francisco Bay Area.
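GPS errors cause a different kind of misalignment. If the reported position may be off by up to `e` metres, the possible true positions form a circle of that radius, and seen from a landmark `d` metres away that circle subtends a half-angle of asin(e/d). That is the largest possible error in the computed bearing to the landmark. The sketch below (function name hypothetical) shows why nearby objects suffer most.

```python
import math


def worst_case_bearing_error_deg(position_error_m, target_distance_m):
    """Largest possible error (degrees) in the bearing to a target when the
    user's reported position may be off by up to position_error_m metres."""
    if target_distance_m <= 0:
        raise ValueError("target distance must be positive")
    if position_error_m >= target_distance_m:
        # The true position could be on the far side of the target,
        # so the bearing is effectively unknown.
        return 180.0
    # Circle of possible positions (radius e) seen from the target at distance d.
    return math.degrees(math.asin(position_error_m / target_distance_m))
```

A 20 m position error allows up to about 11.5 degrees of bearing error for a landmark 100 m away, but only about 1.1 degrees for one 1 km away.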

There is a solution, but it’s not an easy one to implement. Developers could incorporate a type of OCR (optical character recognition) software to analyze video “seen” by the iPhone’s camera to confirm or correct positions. For example, the user could point the iPhone at the local Starbucks store and the AR app would “read” the sign and confirm the location. Or a navigation program could “see” road signs or even the lines in the road to better resolve driving information. Using video recognition as a geospatial input could be the fifth element needed to create robust AR applications for mobile devices with less than perfect GPS tracking. Real-time video-based OCR is already functioning on the iPhone in various test applications, so this capability is coming sooner than you think.
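The sign-reading idea can be sketched as a cross-check between what the camera reads and what the GPS reports. This is an illustration under stated assumptions, not any existing app’s method: the OCR engine itself is out of scope, so its output is taken as a plain string; the landmark list, its field names, and the coordinates are hypothetical; and the location counts as confirmed only if exactly one landmark named in the text lies within the GPS error radius.

```python
import math
import re

# Assumption: spherical Earth with mean radius in metres.
EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def _normalize(text):
    # Lower-case and keep only letters/digits so "STARBUCKS COFFEE!" contains "starbucks".
    return re.sub(r"[^a-z0-9]", "", text.lower())


def confirm_location(ocr_text, fix, landmarks, radius_m):
    """Return the single landmark named in ocr_text within radius_m of fix, else None.

    fix: (lat_deg, lon_deg) from GPS.
    landmarks: hypothetical list of dicts with "name", "lat" and "lon" keys.
    """
    seen = _normalize(ocr_text)
    lat, lon = fix
    candidates = [
        lm for lm in landmarks
        if _normalize(lm["name"])  # ignore landmarks with empty names
        and _normalize(lm["name"]) in seen
        and haversine_m(lat, lon, lm["lat"], lm["lon"]) <= radius_m
    ]
    return candidates[0] if len(candidates) == 1 else None
```

Requiring exactly one match is a deliberately conservative choice: two nearby stores with the same name leave the location unconfirmed rather than guessed.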

Augmented Reality apps

The number of apps incorporating some form of augmented reality is growing and we expect to see the technology incorporated in future apps in new and innovative ways. Let’s take a closer look at three apps that demonstrate the potential of AR as it is currently implemented.

AR solutions in development

Other augmented reality solutions are currently in development. For example, Augmented ID (tat.se) uses facial recognition to identify and display information about the people around you. Nothing sinister about this one; the people you are trying to identify have to set up an account and give permission for information to be displayed. Another interesting R&D project is demoed on YouTube.com (search for 7zE9_zGw878). The video shows an iPhone camera “looking at” three cards, each with a picture of a different animal. The app recognizes the animal and displays a 3D representation of it superimposed over the picture on the screen.

The future of Augmented Reality apps

In spite of the iPhone’s current limitations, we are going to see many more AR apps for the iPhone. At first, we’ll see a lot of GPS discovery apps that provide directions, augment subway maps, or identify landmarks. But if smart programmers integrate some form of video geospatial recognition, AR will be taken to the next level and we’ll see a bevy of new apps with enhanced capabilities. For example, we might see…

- an app that lets you play cards with your friends by displaying virtual cards on a table;

- an app that displays GPS data (speed, moving map, turns, etc.) on the screen matched to what is seen on the road;

- an app that displays prices and additional information about products as you view them via the camera in the store;

- an app with facial or badge ID recognition capability that displays info about the person;

- an app that displays a virtual image of the finished building when you walk by a building site;

- an app that turns your iPhone into a virtual pet dog that can detect the ground and objects.

The first draft of this article was written in September of last year, but we decided to hold it over to this issue because AR was evolving rapidly and we wanted to incorporate information about new AR apps we expected to be released towards the end of the year.

I discussed the Layar Reality Browser above, and version 3.0 of the app was undergoing the Apple approval process as of 12/28/09. Earlier in the article I discussed Reality Browser’s ability to display information about a building when you pointed the iPhone’s camera at it. As described on Layar’s Web site (layar.com), the new version of the app takes this one step further. You can now point your camera at a building that is under construction and get more details about the building. For example, MVRDV has designed and is constructing “Market Hall,” a unique U-shaped building that will be completed in 2014. If you’re touring Rotterdam and point your camera at the construction site, Reality Browser displays a graphical representation of the completed building and lets you access more information about it.
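Placing a rendering of a building that doesn’t exist yet means anchoring it to the site’s coordinates and scaling it with distance. A minimal sketch under stated assumptions, not Layar’s implementation: convert the site’s latitude and longitude into an east/north offset from the viewer with an equirectangular approximation (adequate over a few hundred metres), then size the overlay with a pinhole camera model. The function names are hypothetical, and the 40 m building height and 60-degree vertical field of view in the example are placeholders.

```python
import math

# Assumption: spherical Earth with mean radius in metres.
EARTH_RADIUS_M = 6_371_000.0


def local_offset_m(viewer_lat, viewer_lon, site_lat, site_lon):
    """East/north offset (metres) of the site from the viewer.

    Equirectangular approximation: only reasonable for short distances.
    """
    mean_lat = math.radians((viewer_lat + site_lat) / 2.0)
    east = math.radians(site_lon - viewer_lon) * math.cos(mean_lat) * EARTH_RADIUS_M
    north = math.radians(site_lat - viewer_lat) * EARTH_RADIUS_M
    return east, north


def overlay_height_px(viewer, site, building_height_m, vfov_deg, screen_height_px):
    """On-screen height in pixels of a building of the given height at the site.

    viewer, site: (lat_deg, lon_deg). vfov_deg is an assumed vertical field of view.
    """
    east, north = local_offset_m(*viewer, *site)
    distance = math.hypot(east, north)
    if distance == 0:
        raise ValueError("viewer is standing on the anchor point")
    focal_px = (screen_height_px / 2.0) / math.tan(math.radians(vfov_deg / 2.0))
    # Pinhole projection: image size is proportional to size / distance.
    return focal_px * building_height_m / distance
```

With those placeholders, a 40 m building about 111 m away comes out roughly 150 pixels tall on a 480-pixel-high screen, and half that from twice the distance.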

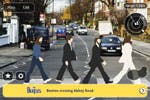

Reality Browser 3.0 displaying the construction site of Market Hall (left) and the famous Abbey Road crosswalk (right).

Layar’s Web site also has a screenshot of Reality Browser 3.0 viewing the Abbey Road crosswalk with images of the Beatles superimposed on the screen. This is part of the “Beatles Discover Tour,” which covers 42 locations in London. The renderings of the building and the Beatles are not that great, but in time the rendering software and the graphics capabilities of the iPhone will improve.

The “Beatles” example demonstrated a whole class of AR possibilities I hadn’t considered: historic AR. How about pointing your camera at a site and seeing a historic building that once stood there but no longer exists? How about viewing a battlefield from the Napoleonic Wars and seeing accurate images of the men who fought there? How about viewing a field or garden and seeing how it looks in different seasons? There are a lot of possibilities here.

Remember iHUD, the app I started the article with? Between the first and final drafts, the developer updated it. As you can see in this screenshot, the app now displays the iHUD information over the real-time image of what the camera is viewing. I don’t think it’s doing this to improve accuracy, but it gives you an idea of just how fast things are moving.

The latest version of iHUD

Additional AR Apps

In addition to the apps mentioned elsewhere in the article, you’ll find these AR programs in the App Store. All require iPhone OS 3.1, and most also require the capabilities of the iPhone 3GS.

Car Finder ($0.99; intridea.com) is an AR app that uses the GPS and compass to note the location of your parked car and help you find your way back to it.

Cyclopedia ($1.99; chemicalwedding.tv/cyclopedia.html) overlays information from Wikipedia relating to your current location and where your camera is pointed.

DishPointer Augmented Reality ($9.99; dishpointer.com) displays the real-time location of satellites when you point your iPhone’s camera at the sky.

Lodestone AR Compass ($1.99; dovevalleyapps.com/dva/lodestone) projects a detailed compass over a live view from your camera.

London Tube (Free; presselite.com/iphone/londontube) is a guide to the London subway system that displays the location of and directions to the nearest station via map or on a live photo of your current location.

Metro Paris Subway ($0.99; presselite.com/iphone/metroparis/index_en.html) is a guide to the Paris subway system with features similar to London Tube (above).

Nearest Tube ($1.99; acrossair.com) displays the nearest London subway station on the iPhone’s camera screen.

NearestWiki ($1.99; acrossair.com) displays information about your current location anywhere in the world on the iPhone’s camera screen.

Pocket Universe: Virtual Sky Astronomy ($2.99; craicdesign.com) includes a “virtual sky” mode that displays astronomical information about the part of the sky your camera is pointed at.

Washington Metro ($0.99; presselite.com/iphone/washingtonmetro) is a guide to the Washington, D.C. subway system with features similar to London Tube (above).

WorkSnug (Free; worksnug.com) displays information about the best places to get work done based on where you are and what your camera is pointing at. It is initially available for London and will expand to other major cities.
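Several of these apps (Nearest Tube, London Tube, Car Finder) have to answer the same two questions: which place is closest, and which way the user should turn to face it. A minimal sketch, assuming a list of places with coordinates and a compass heading from the device; the function name is hypothetical, the station names and coordinates in the example are made-up placeholders, and none of this is taken from the apps themselves.

```python
import math

# Assumption: spherical Earth with mean radius in metres.
EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing (0 = north, 90 = east) from point 1 toward point 2."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dl = math.radians(lon2 - lon1)
    x = math.sin(dl) * math.cos(p2)
    y = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dl)
    return math.degrees(math.atan2(x, y)) % 360.0


def nearest_station(user_lat, user_lon, heading_deg, stations):
    """Return (name, distance_m, relative_bearing_deg) for the closest station.

    stations: non-empty list of (name, lat_deg, lon_deg) tuples (hypothetical data).
    relative_bearing_deg is negative to the left of where the camera points and
    positive to the right, wrapped into [-180, 180).
    """
    name, lat, lon = min(stations, key=lambda s: haversine_m(user_lat, user_lon, s[1], s[2]))
    distance = haversine_m(user_lat, user_lon, lat, lon)
    bearing = initial_bearing_deg(user_lat, user_lon, lat, lon)
    relative = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    return name, distance, relative
```

An overlay would draw the station label only when the relative bearing falls inside the camera’s field of view, and otherwise show an arrow telling the user which way to turn.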